In the World of Grains – Part 6

A First Attempt to Classify and to Systemise Approaches to Using Sonic Grains in Music and Audible Art

(excerpt from my book: https://www.dev.rofilm-media.net/node/332)

When I planned this book and its individual chapters, I had intended to write here, in chapter 3, about different practical ways of applying sonic grains to the processes of producing and composing music, sound and audible art. But I soon learned what a mess and what mayhem we come upon out there in the www, the wild world of wondrous conceptions of sonic grains.

Things get even more complicated because of the conflict between, on one side, the agents of well-planned compositions, who seek deeper sense and meaning and aim for a rather intellectual pleasure, and, on the opposite side, the ambassadors of mere sensual enjoyment, who are in permanent danger of getting lost in delirious orgies of (quite often) shallow sounds. It is a conflict beloved, fostered and cultivated by both parties, and for quite a long time. Nowhere is it fought out more virulently than in the field of sonic grains and their applications. This fact may not be obvious to everybody, because one of the strategies followed by both sides is a kind of “actively ignoring” each other.

These are the reasons why I decided to add this new chapter 3 to the plan of my book. Let me start doing what the headline of this chapter asks me to do. And perhaps you will find something in this new chapter 3 that may even pacify the conflict just mentioned.

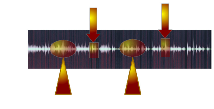

1. We can take a given sound, a given sample that is available in its entirety to our granular sound processor, and extract grain-sized snippets (lasting only milliseconds) from it. The process of extracting may or may not follow certain predefined compositional rules.

2. We can generate grain-sized snippets of sound “from nothing” using electronic instruments or coding techniques (see chapter 2). And again, we may or may not follow predefined compositional, musical rules in doing so.

3. If we would rather take the route of extracting from existing sound described in number 1, we can also apply real-time or non-real-time processing to live sound (instead of to rather short samples that are loaded completely into our granular sound processing software): for example, musicians playing their instruments, a tape or another kind of recorder, or the sequencer of our DAW playing something. And once again: the way our “grainmachine” treats the live sound may or may not be predefined, more or less strictly. It is here that we are in the greatest danger of succumbing to the temptation of leaving the realm of art and degrading ourselves, and the idea of sonic grains, to merely producing some chichi effects.
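To make approaches 1 and 2 a little more concrete, here is a minimal sketch in plain Python (no audio libraries, samples as lists of floats). It assumes the common convention of shaping each grain with a Hann window so that it fades in and out without clicks. The function names, the 44.1 kHz sample rate and the random start points are illustrative assumptions only; the random choice merely stands in for whatever extraction rule a composer might define, and none of this describes how any particular granular application works internally.

```python
import math
import random


def hann(n):
    """Hann window of length n (n >= 2), used here as the grain envelope."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def extract_grain(sample, start, length):
    """Approach 1: cut a windowed snippet of `length` samples out of a stored sample."""
    snippet = sample[start:start + length]
    if len(snippet) < length:  # zero-pad if we run past the end of the sample
        snippet = snippet + [0.0] * (length - len(snippet))
    return [s * w for s, w in zip(snippet, hann(length))]


def synth_grain(freq_hz, length, sr=44100):
    """Approach 2: generate a grain 'from nothing', here a Hann-windowed sine tone."""
    env = hann(length)
    return [env[i] * math.sin(2 * math.pi * freq_hz * i / sr) for i in range(length)]


def random_extraction(sample, n_grains, length, seed=0):
    """Hypothetical extraction 'rule': pick grain start points at random (seeded)."""
    rng = random.Random(seed)
    starts = [rng.randrange(0, max(1, len(sample) - length)) for _ in range(n_grains)]
    return [extract_grain(sample, s, length) for s in starts]
```

At 44.1 kHz a 20 ms grain is 882 samples long, so `synth_grain(440.0, 882)` yields a short, enveloped burst of a 440 Hz tone. Replacing `random_extraction` with a deterministic rule (evenly spaced start points, for instance) is where a predefined compositional intention would enter.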

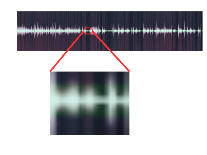

4. Or we can do something completely different: we can investigate real-world sound (including the sounds of musical instruments) down to its atomic sonic content, lasting only milliseconds, either to obtain new sounds and new playable virtual instruments, or to gain deeper insight into the investigated (macroscopic) sound, or both.
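Approach 4 can be illustrated with a small analysis sketch: cut a recorded sound into consecutive grain-sized frames and report, for each frame, its start time, its strongest frequency and its loudness (RMS). To stay dependency-free this uses a naive discrete Fourier transform on an unwindowed (rectangular) frame; that choice, the function names and the "peak frequency plus RMS" report are assumptions for illustration, and a serious analysis would use an FFT library and a proper analysis window.

```python
import math


def dft_magnitudes(frame):
    """Naive DFT magnitudes for bins 0..N/2 (O(N^2), fine for short grains)."""
    n = len(frame)
    mags = []
    for k in range(n // 2 + 1):
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(frame))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(frame))
        mags.append(math.hypot(re, im))
    return mags


def analyse_grains(signal, grain_len, sr=44100):
    """Cut `signal` into consecutive grains (grain_len >= 2 samples) and return
    (start time in s, peak frequency in Hz, RMS) for each complete grain."""
    report = []
    for start in range(0, len(signal) - grain_len + 1, grain_len):
        frame = signal[start:start + grain_len]
        mags = dft_magnitudes(frame)
        k_peak = max(range(1, len(mags)), key=lambda k: mags[k])  # skip the DC bin
        rms = math.sqrt(sum(x * x for x in frame) / grain_len)
        report.append((start / sr, k_peak * sr / grain_len, rms))
    return report
```

For a pure 1000 Hz sine sampled at 8000 Hz and cut into 10 ms grains (80 samples), every grain reports a peak at 1000 Hz and an RMS of about 0.707. For real instrument recordings, the interesting part is how these values drift from grain to grain.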

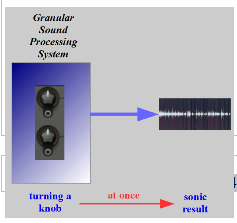

Please don't confuse the terms “live sound” and “real-time”. “Real-time” means that the granular sound processing equipment or setup responds immediately to my actions (I move a slider and get an immediate result, or I change a tiny bit of code and the software reacts immediately), whereas “live sound” means that someone or something outside the granular processing system is producing sound.

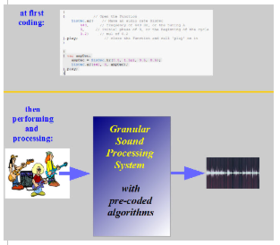

“Non-real-time” processing of live sound means that, in a compositional act, I set the rules for how the live sound (arriving later) shall be treated before the performance (or the recorded piece, or the sequencer of my DAW, etc.) starts. I do this, for example, by coding my composition (writing a computer program), or at least parts of it.
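One way to picture such rules written down before the performance is a small "score" that fixes, for each section of the piece, how the incoming live sound is to be granulated; during the performance the software only looks up which rule is in force. The sketch below is hypothetical throughout: the `GrainRule` fields (grain length, grain density), the section times and the lookup function are illustrative assumptions, not a description of any specific tool.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class GrainRule:
    start_s: float     # performance time (seconds) from which this rule applies
    grain_ms: float    # grain length in milliseconds
    density_hz: float  # grains per second


# Hypothetical score, written *before* the performance: the compositional act.
SCORE = [
    GrainRule(0.0, 80.0, 10.0),   # opening: long, sparse grains
    GrainRule(30.0, 20.0, 60.0),  # middle: shorter, denser grains
    GrainRule(90.0, 5.0, 200.0),  # ending: very short, very dense grains
]


def rule_at(score, t_s):
    """Return the rule in force at performance time t_s (score sorted by start_s)."""
    starts = [r.start_s for r in score]
    i = bisect_right(starts, t_s) - 1
    return score[max(i, 0)]
```

During the performance, a granulator would call, say, `rule_at(SCORE, 45.0)` and apply 20 ms grains at 60 grains per second to whatever live sound is arriving at that moment; the musical decisions were all made in advance.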

All granular sound processing applications, such as VSTs, AUs, etc., work in real time, whether or not they can be used to process live sound. If they cannot, we have to load the complete sound we want to process into the application, where it stays static while we carry out our granular manipulations. If they can, the application is more like a tunnel through which the processed live sound streams, tortured from the tunnel's walls by the granular functions the application has on offer.
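The "tunnel" picture can be sketched as a streaming granulator: live audio arrives block by block, only a limited stretch of its recent past is kept, grains are grabbed from that history, and all sounding grains are overlap-added into the outgoing block. The class name, the block-based interface, the random grain positions and the one-grain-per-block default are assumptions made for this sketch; real plug-ins differ considerably in detail.

```python
import math
import random
from collections import deque


class GrainTunnel:
    """Hypothetical streaming granulator: grains can only be taken from the
    most recent `history_len` samples of the live input (history_len >= grain_len)."""

    def __init__(self, history_len, grain_len, seed=0):
        self.history = deque([0.0] * history_len, maxlen=history_len)
        self.grain_len = grain_len
        self.env = [0.5 - 0.5 * math.cos(2 * math.pi * i / (grain_len - 1))
                    for i in range(grain_len)]  # Hann envelope
        self.rng = random.Random(seed)
        self.active = []  # [grain_samples, read_position] for grains still sounding

    def process_block(self, block, grains_per_block=1):
        self.history.extend(block)  # the live sound streams into the tunnel...
        hist = list(self.history)
        for _ in range(grains_per_block):  # ...and grains are grabbed from its recent past
            start = self.rng.randrange(0, len(hist) - self.grain_len + 1)
            grain = [s * w for s, w in zip(hist[start:start + self.grain_len], self.env)]
            self.active.append([grain, 0])
        out = [0.0] * len(block)
        for g in self.active:  # overlap-add every sounding grain into this output block
            grain, pos = g
            n = min(len(block), len(grain) - pos)
            for i in range(n):
                out[i] += grain[pos + i]
            g[1] = pos + n
        self.active = [g for g in self.active if g[1] < len(g[0])]  # drop finished grains
        return out
```

The contrast with a static sample is visible in the `deque`: the application never sees the whole sound, only a sliding window of its recent past, which is why rearranging grains freely is so limited in this situation.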

Anyway, now that we have generated our grains one way or another, we have to decide how to handle them. In the case of live sound and real-time processing, the answer lies in the prescriptions of the grain-generating process itself, because there is only a very limited possibility of taking a grain, or a cluster of grains, and rearranging it or subjecting it to further sonic processing. The way our granular sound processor treats the live sound in real time is, more or less (perhaps with only tiny deviations), the way the sound comes out as the finished result of our granular composition or treatment.

But in the situations described in numbers 1, 2 and 4, we can store the generated grains and put them on our digital or sonic shelf for later use:

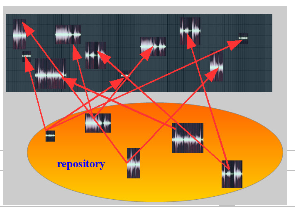

5. We can take or set up clusters of grains and build a whole piece of audible art by accumulating them and arranging them like Lego bricks. We could even do this with single grains, but what cumbersome work that would be if we could not program some code to do it for us, provided we can give the program a repository full of single grains as well as some rules for how to pick them and where to place them.

6. Or we can treat those clusters of grains (or single grains) more like pearls on a chain, crossfading consecutive “pearls” and sometimes changing their order on the chain, but always following a purely serial approach. This is how most (luckily not all) of the “ready-made” applications (VSTs, AUs, etc.) do their work.
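The difference between approach 5 and approach 6 shows up clearly in code. In the sketch below, `place_grains` lets a program put any grain from a repository at any sample position, with overlapping grains simply summed (the "Lego" approach), while `chain_grains` can only play grains one after another in some order, linearly crossfading each into the next (the "pearls on a chain" approach). Both function names, the list-of-floats representation and the linear (equal-gain) crossfade are assumptions for this sketch; an equal-power crossfade would be a common alternative.

```python
def place_grains(grains, placements, total_len):
    """Approach 5 ('Lego bricks'): overlap-add grains at arbitrary sample positions.
    `placements` is a list of (grain_index, start_sample) pairs chosen by some rule."""
    out = [0.0] * total_len
    for idx, start in placements:
        for i, s in enumerate(grains[idx]):
            if 0 <= start + i < total_len:  # ignore samples falling outside the piece
                out[start + i] += s
    return out


def chain_grains(grains, order, xfade):
    """Approach 6 ('pearls on a chain'): play grains serially in the given order,
    linearly crossfading the last `xfade` samples of the chain into the next grain."""
    out = list(grains[order[0]])
    for idx in order[1:]:
        nxt = grains[idx]
        n = min(xfade, len(out), len(nxt))
        for i in range(n):
            a = (i + 1) / (n + 1)  # rising gain for the incoming grain
            j = len(out) - n + i
            out[j] = out[j] * (1 - a) + nxt[i] * a
        out.extend(nxt[n:])
    return out
```

Any placement rule, however complex, only has to produce the `(grain_index, start_sample)` pairs for `place_grains`; for `chain_grains`, the only freedom left is the `order` list.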

I can't help pointing out that the shorter a grain is, the less certain its perceived frequency content becomes and, conversely, the more clearly we want to recognise the (frequency/spectral) content of a grain, the longer the grain has to be, and the less certain we become about precisely where in the original sound it comes from. Isn't that a whiff of quantum physics flashing in here?
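The trade-off can be put into numbers. A grain lasting T seconds, analysed with a discrete Fourier transform, yields frequency bins spaced 1/T apart, whatever the sample rate; more generally, Gabor's time-frequency uncertainty relation states that the product of a signal's duration spread and bandwidth spread (as standard deviations) cannot fall below 1/(4π). The two helper functions below are a rule-of-thumb sketch of the first statement only; windowing widens the spectral peaks further, so real resolution is somewhat coarser than these figures.

```python
def bin_spacing_hz(grain_ms):
    """Spacing of DFT frequency bins for a grain of this length: delta_f = 1 / T."""
    return 1000.0 / grain_ms


def min_grain_ms(freq_gap_hz):
    """Rule of thumb: shortest grain (ms) whose DFT bins are as fine as freq_gap_hz."""
    return 1000.0 / freq_gap_hz


for ms in (5, 20, 100):
    print(f"{ms:>4} ms grain -> bins {bin_spacing_hz(ms):6.1f} Hz apart")
```

A 10 ms grain thus cannot tell apart frequencies much closer than about 100 Hz, while separating two tones only 5 Hz apart (say 440 Hz and 445 Hz) calls for a grain of at least about 200 ms, by which time the grain is barely a "grain" any more.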

By the time we read number 5 at the latest, we realise that we have to think of a way to characterise and classify the grains themselves.

to be continued

to part 1 ("A Short History of Granular Synthesis – Part 1"): https://www.dev.rofilm-media.net/node/340

to part 2 ("A Short History of Granular Synthesis – Part 2"): https://www.dev.rofilm-media.net/node/342

to part 3 ("A Short History of Granular Synthesis – Part 3"): https://www.dev.rofilm-media.net/node/346

to part 4 ("A Short History of Granular Synthesis – Part 4"): https://www.dev.rofilm-media.net/node/356

to part 5 ("In the World of Grains – Part 1"): https://www.dev.rofilm-media.net/node/364

to part 6 ("In the World of Grains – Part 2"): https://www.dev.rofilm-media.net/node/373

to part 7 ("In the World of Grains – Part 3"): https://www.dev.rofilm-media.net/node/378

to part 8 ("In the World of Grains – Part 4"): https://www.dev.rofilm-media.net/node/385

to part 9 ("In the World of Grains – Part 5"): https://www.dev.rofilm-media.net/node/390

to "In the World of Grains – Part 7": https://www.dev.rofilm-media.net/node/407

to "In the World of Grains – Part 8": https://www.dev.rofilm-media.net/node/414

to "In the World of Grains – Part 9": https://www.dev.rofilm-media.net/node/421
